In the olden days, data collection was a nightmare for businesses. Imagine having to go through every single website and collect the relevant data for your business by hand.

Times changed slightly, and we were introduced to the world of screen scraping, which made the manual labor easier but put the IT department under a dark spell instead. Identifying and reacting to live screens during development and host application changes doesn’t sound fun.

But hey, that’s not why we have gathered here today. This article covers modern-day screen scraping tools that have made data collection as easy as A-B-C.

Before I get further into the topic, let’s first understand what screen scraping is exactly.

What is Screen Scraping?

Screen scraping is the process of gathering screen display data from one application and transferring it to another application. This technique extracts visual data from websites and applications for research purposes.

A simple scraping application pulls data from the source application and parses it into its own view model. This visual data is collected as raw text from the UI elements that appear on any website or application.
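As a minimal sketch of that idea, the Python snippet below parses raw screen text into a view model. The `AccountView` class, the `parse_screen` function, and the sample screen text are all hypothetical and exist only to illustrate the step; a real source application will lay out its screens differently.

```python
from dataclasses import dataclass


@dataclass
class AccountView:
    """Hypothetical view model used by the receiving application."""
    name: str
    balance: float


def parse_screen(raw_text: str) -> AccountView:
    """Turn 'Label: value' lines captured from a screen into a view model."""
    fields = {}
    for line in raw_text.splitlines():
        if ":" not in line:
            continue  # skip decorative lines that hold no label/value pair
        label, value = line.split(":", 1)
        fields[label.strip().lower()] = value.strip()
    return AccountView(
        name=fields["name"],
        # Remove thousands separators before converting to a number.
        balance=float(fields["balance"].replace(",", "")),
    )


# Hypothetical raw text, as it might be read from a legacy screen.
SAMPLE_SCREEN = """\
=== ACCOUNT SUMMARY ===
Name: Jane Doe
Balance: 1,250.75
"""

view = parse_screen(SAMPLE_SCREEN)
print(view)
```

The scraper only sees text, so every field arrives as a string; the view model is where that text gets typed and named for the target application.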

Difference Between Screen Scraping and Web Scraping

Screen scraping focuses on the visual data that appears on the screen instead of individual elements of a website. Web scraping, on the other hand, is all about extracting or parsing individual pieces of data from an application or website. While web scraping lets you extract individual elements of a page, like stats, email addresses, text, and URLs, screen scraping grabs the visual data from the screen, like graphs and charts.

While both of these data scraping techniques involve data extraction from a website or application, they are entirely different from one another.
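To make the web scraping side of that contrast concrete, here is a small Python sketch that pulls individual elements (links and email addresses) out of a page’s HTML using only the standard library. The `LinkCollector` class, the `extract_elements` function, and the sample HTML are hypothetical, and the email pattern is deliberately simple rather than exhaustive.

```python
import re
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collects the href of every <a> tag, one element at a time."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def extract_elements(html: str):
    """Return (links, emails) found in the given HTML string."""
    collector = LinkCollector()
    collector.feed(html)
    # Simplified email pattern, good enough for an illustration.
    emails = re.findall(r"[\w.+-]+@[\w-]+\.[\w.-]+", html)
    return collector.links, emails


# Hypothetical page fragment.
SAMPLE_HTML = (
    '<p>Contact sales@example.com or see '
    '<a href="https://example.com/pricing">pricing</a>.</p>'
)

links, emails = extract_elements(SAMPLE_HTML)
print(links, emails)
```

A screen scraper would instead work from what is rendered on the screen, such as a screenshot of a chart, which is why the two techniques call for different tools.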

What is Screen Scraping Used For?

Screen scraping is used in a variety of fields, where it serves several purposes, such as:

- To translate data from a legacy application to a modern application.

- To track user profiles and check their online activities.

- To track financial transactions in banking applications.

- To run data aggregators and make website comparisons.

Screen Scraping Use Cases and Examples

Some of the most popular screen scraping examples include:

1. Banking Sector

In banking, lenders use screen scraping to gather their customers’ data. For this purpose, financial applications scrape user data and offer powerful insights. However, these applications only work when users explicitly allow it, trusting the organization with their personal information.

2. Comparing E-commerce Product Pricing

Screen scrapers come in handy when comparing prices between similar products from different retailers, or for the same product sold by different vendors. This is especially useful for intermediaries who sell products in bulk and can use discounted prices to increase their profits.

3. Upgrading Outdated Technologies

Sometimes, companies have information systems and other applications built on outdated technologies. The problem is that the information in these legacy applications is critical to day-to-day operations. Screen scraping comes in handy here, as it translates the data to new user interfaces. For example, a video podcast might use this technique to create audio versions of videos for people with visual impairments or for people who are learning English as a second language.

4. Performing Website Transitions

Similar to moving legacy applications, screen scrapers are also helpful in making website transitions. Businesses with rather heavy websites are sometimes tasked with transitioning to a more modern layout or environment while keeping the data safe. In such cases, screen scraping can be used to easily and quickly export data from the old website to the new one.

Screen Scraping Using Crawlbase

More interesting, though, are the use cases of screen scraping with the help of Crawlbase. Let’s discuss the top three:

1. Crawlbase - Amazon

As the world’s largest e-commerce platform, Amazon is literally a goldmine. If your business requires constant access to Amazon pages, you may find it increasingly difficult to scrape these pages due to persistent roadblocks like captchas and bot detection.

Crawlbase’s Screenshots API is built on top of thousands of quality proxies coupled with the most advanced AI. This API works well with every Amazon page, like product details, offer listings, seller’s information, and reviews.

The neural AI handles each request as accurately as possible. With a response time of just 4-10 seconds, this API lets your business screen scrape all Amazon pages efficiently and with no compromises.

2. Crawlbase - GitHub

As the most advanced development platform online, GitHub holds an invaluable position for developers who build and maintain their applications on it. If you are a software company, you’ll definitely need to scrape data from millions of repositories on this platform at some point.

Crawlbase’s Screenshots API ensures that you can stay secure and anonymous at all times while scraping GitHub pages. Since the API is built on top of thousands of quality residential and data center proxies integrated with artificial intelligence, it guarantees security and anonymity by using an anonymous proxy for each screen scraping attempt.

3. Crawlbase - Walmart

Hello retailers, we know you need to collect the contact information of potential customers. You may be aware that the biggest retail corporation in America has a substantial online product database that meets this exact requirement. Yes, we are talking about Walmart!

If you are looking to extract various product information for data mining or other purposes, Walmart’s vast inventory can be of great value. Crawlbase allows you to screenshot all this data and download it without a hassle!

Benefits of Screen Scraping

Screen scraping has a lot of benefits to offer. The most notable ones include:

1. Execution Simplicity

Screen scraping tools, once executed, cover the entire domain instead of a single page. This allows the user to get all the information at once, from a single source, rather than having to execute the function separately every time.

2. Efficiency

The best thing about screen scraping tools is their excellent data collection speed. They enable you to rapidly scrape many websites simultaneously without having to watch and control every request.

3. Cost-Effectiveness

Surprisingly, screen scraping is relatively inexpensive. Even a basic scraping service can handle complicated tasks on a very low budget. A simple scraper API can often do the whole job without the need to invest in extra staff or complex machinery.

4. Accuracy

Screen scraping is not just efficient and cost-effective; it is also accurate. The data collected from websites is brought in with precision and accuracy while ignoring the noise.

5. Maintaining Data Quality

Apart from the benefits discussed above, screen scraping also enables businesses to automate their repetitive data transfer processes while ensuring data quality and reducing data processing time. This is especially important because data collection and transformation are prone to duplicates and typos. Screen scraping with Crawlbase can achieve 100% accuracy in data collection from different applications in under 10 seconds.

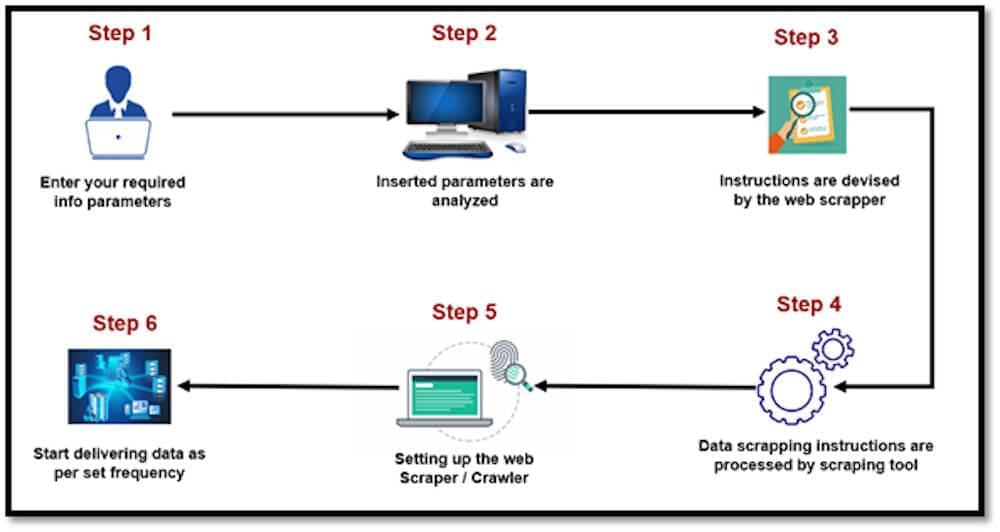

Implementing Screen Scraping

Before we get to the implementation part, let us first describe how screen scraping works. These tools are scripted to search for specific UI elements and extract data from them, usually into spreadsheets. The extracted data is then exported to a readable file format, like JPEG or PDF, for further customization or analysis.

In many cases, screen scraping tools also leverage OCR to turn the extracted data into machine-readable text before converting it into a designated file format.
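The flow described above can be sketched in a few lines of Python: select the UI elements you care about and write their text out as spreadsheet-style CSV. The `CAPTURED_ELEMENTS` list and the `elements_to_csv` function are hypothetical stand-ins; in a real tool the captured text would come from the application’s UI or from an OCR step, which is not shown here.

```python
import csv
import io

# Hypothetical capture: each UI element found on a screen, with its label
# and the raw text it displays. A real tool would read these from the
# application's UI or from OCR output.
CAPTURED_ELEMENTS = [
    {"label": "Product", "text": " Widget "},
    {"label": "Price", "text": "$19.99"},
    {"label": "Banner", "text": "SALE!"},
]


def elements_to_csv(elements, wanted_labels):
    """Keep only the wanted UI elements and write them as one CSV row."""
    found = {e["label"]: e["text"].strip() for e in elements}
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(wanted_labels)  # header row
    writer.writerow([found.get(label, "") for label in wanted_labels])
    return buffer.getvalue()


csv_text = elements_to_csv(CAPTURED_ELEMENTS, ["Product", "Price"])
print(csv_text)
```

Elements that are not on the wanted list, like the banner here, are simply ignored, which is how a scripted scraper filters out noise.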

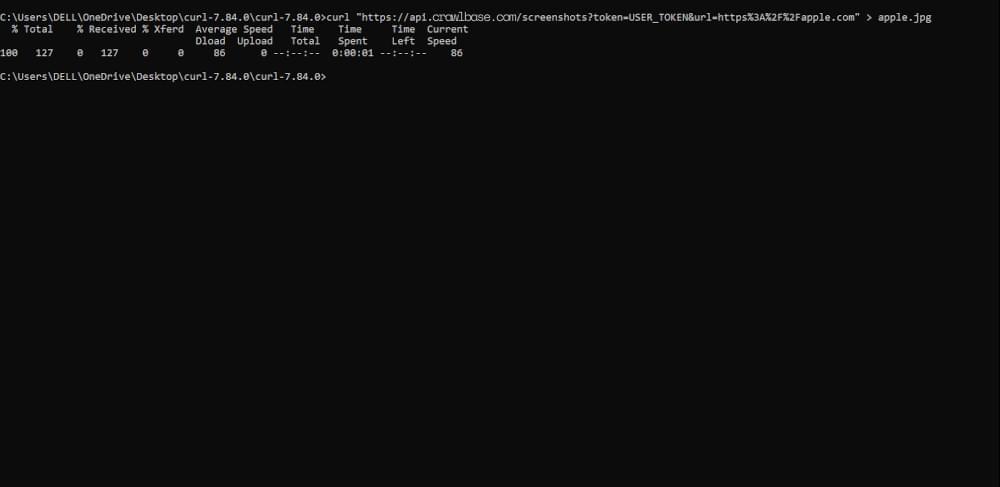

Calling the Screenshots API with cURL is pretty simple:

- Download cURL from https://curl.se/download.html

- Go to the Start menu on your system and open the ‘Run’ program

- From there, run cmd and open the directory where cURL is installed.

- Start running your commands and calling the API from here.

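You can try the following as the first command, replacing TOKEN with your own token. The quotes keep the command prompt from treating & as a command separator:

curl "https://api.crawlbase.com/screenshots?token=TOKEN&url=https%3A%2F%2Fapple.com"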

Alternatively, you can do Ruby or Python screen scraping as well. Detailed documentation for this product is available in the Crawlbase documentation.
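For Python, a minimal sketch of the same request might start by building the URL used in the cURL example. The endpoint and the `token` and `url` parameters come from that command; the `build_screenshot_url` helper is a hypothetical name, and `TOKEN` is a placeholder for your own token.

```python
from urllib.parse import urlencode

# Endpoint taken from the cURL example above.
API_ENDPOINT = "https://api.crawlbase.com/screenshots"


def build_screenshot_url(token: str, target_url: str) -> str:
    """Build the request URL, percent-encoding the target page address."""
    query = urlencode({"token": token, "url": target_url})
    return f"{API_ENDPOINT}?{query}"


# "TOKEN" is a placeholder; substitute your own token before sending.
request_url = build_screenshot_url("TOKEN", "https://apple.com")
print(request_url)
```

To actually send the request, you could pass `request_url` to `urllib.request.urlopen` with a real token. Check the product documentation for the response format rather than assuming one.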

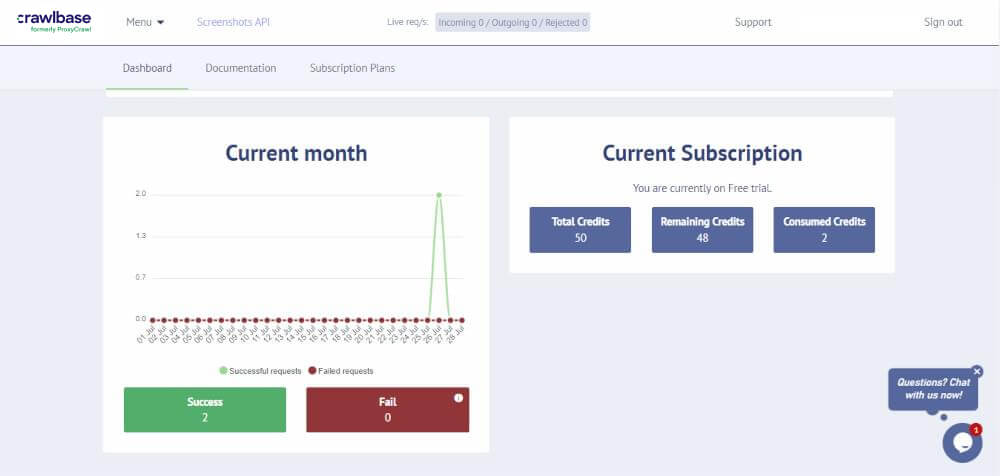

Please note that our screen scraping software’s results will appear on your dashboard.

Automate Screen Scraping with Crawlbase

As a business, you need screen scraping for useful data collection. However, this work takes a lot of time and effort when done manually. Instead, your business can get help from Crawlbase’s Screenshots API.

This automated Screenshots API allows users to take screenshots of websites and keep track of the visual changes on every page they crawl. The API uses the latest Chrome browsers to take screenshots of any website at any screen resolution.

The best part about this API is its anti-bot detection feature: the Screenshots API bypasses blocked and CAPTCHA pages. It takes error-free screenshots from different locations worldwide.

Final Words

This tech-focused era requires tons of data collection, and that is where screen scraping comes in handy. It helps you comb through hundreds of websites, whose data is later processed and converted into an easy-to-use format.

Of course, screen scraping can be implemented through a code-based solution, manual labor, or a scraping tool. The quality of the end result depends on the method you choose. Crawlbase’s Screenshots API is one of the best on the market; it allows your web crawler to capture images of the data and use them to generate valuable insights.

Screen scraping uses are endless, and if you, as a business owner, wish to thrive in this ever-changing market, you need to get your hands on a dependable screen scraping tool.

Because data quality counts.