Direct Answer: To scrape Google AI Mode in 2026, avoid browser automation and treat it as a structured data extraction problem. Build a Google search URL with the udm=50 parameter (AI Mode), send it to the Crawlbase Crawling API using a regular token with format=json, optionally include scraper=google-serp, then parse and normalize the response into stable fields like response_text, citations, and links. This approach gives you reliable, machine-readable output without managing headless browsers, proxies, or UI-level parsing.

Google’s AI Mode is changing how search results are presented. Instead of returning a list of links, it generates a direct answer supported by multiple sources, blending summaries with citations and related content.

For developers and SEO teams, this opens up a different kind of dataset. You are not just collecting rankings anymore, but actual answers tied to queries, along with the sources behind them.

This guide walks you through setting up a Python pipeline that uses the Crawlbase Crawling API to fetch, parse, and organize AI Mode results into JSON that you can store, compare, and plug into analytics or content workflows.

For a complete, production-ready implementation, see the project repository on ScraperHub: ScraperHub/how-to-scrape-google-ai-mode-in-2026

Jump to:

- How Google AI Mode works

- Why not scrape the UI

- Crawlbase setup

- What data to extract

- Step-by-step guide

- App integration

- Response structure

- Troubleshooting

- Key takeaways

- FAQ

How Google AI Mode Works for Web Scraping

Google’s AI Mode is built for real users, not scrapers. The interface is dynamic, with content loading progressively and changing based on interaction. Trying to extract data directly from the UI quickly becomes unreliable.

For scraping, the more stable approach is to focus on two things: the URL that triggers AI Mode and the data returned behind the scenes. Instead of dealing with layout changes or timing issues, you work with a predictable request and a structured response.

In this guide, the sample project builds Google AI Mode URLs using udm=50, along with the standard parameters q, gl, and hl, and optionally uule for location targeting. The implementation is simple, as shown below.

    """Build Google Search URLs for AI Mode (udm=50)."""

Source: google_ai_mode/google_ai_mode_url.py

This function acts as the entry point of the pipeline. You pass in a query and get a consistent AI Mode URL in return. From there, the rest of the workflow is straightforward: send the request through Crawlbase, then normalize the response into structured data your system can use.
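As a rough illustration of the URL-building step described above, here is a minimal sketch. The function name `build_ai_mode_url` and its defaults are assumptions made for this example and may not match the code in google_ai_mode/google_ai_mode_url.py; only the parameters q, udm, gl, hl, and uule come from the guide.

```python
from typing import Optional
from urllib.parse import urlencode

GOOGLE_SEARCH_BASE = "https://www.google.com/search"


def build_ai_mode_url(
    query: str,
    gl: str = "us",
    hl: str = "en",
    uule: Optional[str] = None,
) -> str:
    """Return a Google Search URL that requests AI Mode (udm=50).

    Hypothetical helper: the name and defaults are assumptions for this sketch.
    """
    if not query.strip():
        raise ValueError("query must not be empty")
    params = {"q": query, "udm": "50", "gl": gl, "hl": hl}
    if uule:
        # uule is optional and only added when location targeting is wanted.
        params["uule"] = uule
    # urlencode percent-encodes values and turns spaces into "+".
    return f"{GOOGLE_SEARCH_BASE}?{urlencode(params)}"
```

Calling `build_ai_mode_url("web scraping")` yields a URL with the query encoded as `web+scraping` followed by the udm, gl, and hl parameters.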

Why You Should Avoid Scraping the Google AI Mode UI

You can scrape AI Mode by automating a browser, but it comes with trade-offs.

Once you go down that route, you have to deal with rendering delays, timing issues, and selectors that break whenever Google updates the interface. On top of that, there is bot detection to manage and the overhead of running and maintaining browser instances at scale.

It works for small setups, but it becomes fragile and expensive as you grow.

A JSON-first approach simplifies the entire flow. Instead of reproducing user interactions, you reduce it to:

request → response → parse

No browser layer, no UI dependencies, and far fewer points of failure.

How Crawlbase Helps You Scrape Google AI Mode

Crawlbase handles the data acquisition layer. It is not just forwarding requests. It takes care of fetching the page, dealing with blocking, and returning a structured response you can work with.

In this setup, the HTTP client stays intentionally simple. You send a GET request to https://api.crawlbase.com/ with a few parameters: token, url, and format=json. You can also include scraper=google-serp if you want Crawlbase to apply page-specific parsing. The sample CLI uses this by default unless you disable it.

The implementation from the sample project looks like this:

    """Minimal Crawlbase Crawling API client."""

Source: google_ai_mode/crawlbase_client.py

At this point, you are no longer dealing with browser state or raw HTML. You receive structured JSON that includes the page content and metadata, which can go straight into your parser.
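The request described in this section can be sketched with the standard library alone. This is an illustration under assumptions, not the code from google_ai_mode/crawlbase_client.py: the helper names `build_crawlbase_request_url` and `fetch_json` and the timeout value are invented for this example, while the endpoint and the token, url, format, and scraper parameters come from the guide.

```python
import json
from typing import Any
from urllib.parse import urlencode
from urllib.request import urlopen

CRAWLBASE_API = "https://api.crawlbase.com/"


def build_crawlbase_request_url(token: str, target_url: str, use_scraper: bool = True) -> str:
    """Compose the Crawling API request URL (hypothetical helper name)."""
    params = {"token": token, "url": target_url, "format": "json"}
    if use_scraper:
        # Optional: ask Crawlbase to apply its Google SERP parser.
        params["scraper"] = "google-serp"
    # urlencode percent-encodes the nested Google URL so it survives as one value.
    return f"{CRAWLBASE_API}?{urlencode(params)}"


def fetch_json(request_url: str, timeout: float = 90.0) -> Any:
    """Send the GET request and decode the JSON body (performs network I/O).

    urlopen raises urllib.error.HTTPError for non-2xx responses.
    The 90-second timeout is an assumption, not a documented value.
    """
    with urlopen(request_url, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

Keeping URL construction separate from the network call makes the request easy to inspect and test without spending credits.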

One important detail to keep in mind: even when you request an AI Mode URL, the google-serp scraper may return a more traditional SERP-shaped JSON. That is expected. The sample normalizer is designed to handle both formats.

This is what makes the setup practical. You are not tightly coupled to one response format, and you do not need to constantly chase UI changes.

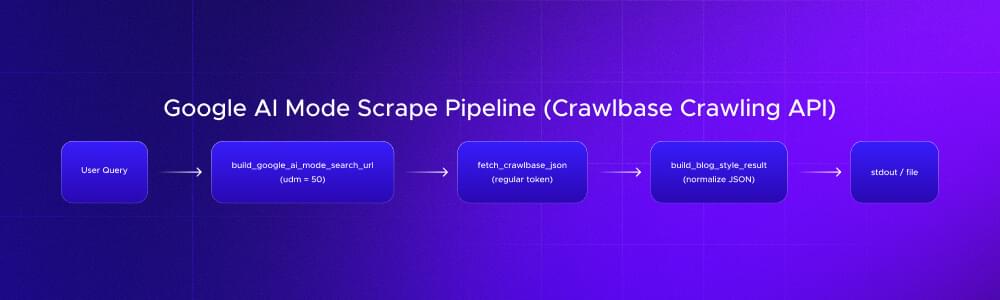

At a high level, the pipeline looks like this:

You start with a query, convert it into an AI Mode URL, send it through Crawlbase, then normalize the JSON into structured output. From there, the data can be written to a file, stored, or passed into downstream systems.

What Data to Extract from Google AI Mode Results

Once you have the JSON response, the next step is deciding what data actually matters. You are not trying to capture everything in the payload. You want a small set of fields that are stable and useful.

In this setup, the output is normalized into three core fields:

| Data | Field |
|---|---|
| Summary text | results[0].content.response_text |
| Citations (URL + snippet) | results[0].content.citations |
| Reference links | results[0].content.links |

These map directly to how AI Mode works. You get a generated answer, a set of sources backing that answer, and a broader set of links related to the query.

The extraction logic is handled in the normalizer. Instead of relying on fixed keys, it looks for multiple possible fields and falls back when needed. This is important because the response shape can vary depending on how Crawlbase or Google structures the payload.

Here is the core extraction function:

    def extract_content_fields(parsed_body: Any) -> dict[str, Any]:

Source: google_ai_mode/normalize.py

This approach keeps your parser flexible. It does not assume a single response format, and it continues to work even when the payload shifts between AI-style responses and more traditional SERP structures.

- response_text is the generated answer you can analyze or display

- citations are the sources backing that answer

- links give you the broader set of related results

If you are building dashboards or pipelines, this structure is enough to support most use cases without overcomplicating your schema.
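The fallback lookup described in this section can be sketched as follows. The candidate key names in the tuples are hypothetical placeholders, and `extract_fields_sketch` is a name invented for this example; the actual keys and logic in google_ai_mode/normalize.py may differ.

```python
from typing import Any, Dict, Iterable

# Hypothetical candidate keys; real payload keys may differ.
TEXT_KEYS = ("response_text", "answer", "summary")
CITATION_KEYS = ("citations", "sources", "references")
LINK_KEYS = ("links", "organic_results")


def first_present(data: Dict[str, Any], keys: Iterable[str], default: Any) -> Any:
    """Return the first non-empty value found under any candidate key."""
    for key in keys:
        value = data.get(key)
        if value:
            return value
    return default


def extract_fields_sketch(parsed_body: Any) -> Dict[str, Any]:
    """Normalize a parsed payload into response_text, citations, and links."""
    # Anything that is not a dict is treated as an empty payload.
    body = parsed_body if isinstance(parsed_body, dict) else {}
    return {
        "response_text": first_present(body, TEXT_KEYS, ""),
        "citations": first_present(body, CITATION_KEYS, []),
        "links": first_present(body, LINK_KEYS, []),
    }
```

Because every field has a default, downstream code can rely on the three keys always being present, even when the payload arrives in an unexpected shape.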

Step-by-Step Guide to Scrape Google AI Mode in 2026

The fastest way to get started is to run our sample project locally. It handles URL generation, Crawlbase requests, and normalization out of the box.

You will need Python 3.10 or higher installed to run this project, along with a Crawlbase account and a regular Crawling API token.

Step 1: Clone the repository

    git clone https://github.com/ScraperHub/google-ai-mode-scraper.git

This gives you the full working implementation, including the CLI and parsing logic.

Inside the repository, the actual code lives in the code directory. Move into it:

    cd google-ai-mode-scraper

You should now see:

- requirements.txt

- .env.example

- google_ai_mode/

All remaining steps should be run from this directory.

Step 2: Set up a virtual environment

Set up an isolated Python environment so dependencies do not conflict with your system packages:

    python -m venv .venv

Activate it:

- Windows (PowerShell)

    .venv\Scripts\Activate.ps1

- macOS / Linux

    source .venv/bin/activate

Once activated, your terminal should show (.venv) indicating that Python and pip are scoped to this project.

Step 3: Install dependencies

Install the required Python packages:

    pip install -r requirements.txt

This installs everything needed to:

- call the Crawlbase Crawling API

- parse responses

- run the CLI tool

Step 4: Configure your Crawlbase token

Copy the environment template:

    cp .env.example .env

Windows:

    copy .env.example .env

Open the .env file and set your token:

    CRAWLBASE_REGULAR_TOKEN=your_token_here

Make sure:

- you are using the regular token (Non-Browser-Enabled API Key), not the JavaScript token (Browser Enabled API Key)

- the file is saved in the same directory as requirements.txt

The project uses python-dotenv, so this value will be loaded automatically when you run the script.
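To make the token requirement concrete, here is a small sketch of a lookup that fails early with a readable message. The function name `get_crawlbase_token` is an assumption for this example. It reads from a mapping (defaulting to `os.environ`) and deliberately leaves out the .env loading step that the project delegates to python-dotenv.

```python
import os
from typing import Mapping, Optional


def get_crawlbase_token(env: Optional[Mapping[str, str]] = None) -> str:
    """Return the regular Crawling API token or raise a clear error.

    Hypothetical helper: in the sample project, python-dotenv is expected to
    populate the process environment from .env before a lookup like this runs.
    """
    source = os.environ if env is None else env
    token = source.get("CRAWLBASE_REGULAR_TOKEN", "").strip()
    if not token:
        raise RuntimeError(
            "CRAWLBASE_REGULAR_TOKEN is not set; add it to .env or export it in your shell."
        )
    return token
```

Failing at startup with the variable name in the message tends to be easier to debug than a 401 from the API later in the run.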

Step 5: Run the scraper

With everything set up, run the CLI:

    python -m google_ai_mode "your search query"

Example:

    python -m google_ai_mode "best ai tools for developers"

What happens here:

- the query is converted into an AI Mode URL

- Crawlbase fetches the data

- the response is normalized into structured JSON

The result is printed directly in your terminal.

Step 6: Save output to a file

If you want to store the result instead of just printing it:

    python -m google_ai_mode "your query" > output.json

This writes the full JSON response to output.json, which you can inspect or load into other tools.
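Once the file exists, a few lines of Python are enough to check what was captured. The sketch below assumes the saved file follows the results[0].content layout described in this guide; the helper names `load_content` and `summarize` are invented for this example.

```python
import json
from pathlib import Path
from typing import Any, Dict


def load_content(path: str) -> Dict[str, Any]:
    """Read a saved result file and return results[0].content (empty dict if absent)."""
    payload = json.loads(Path(path).read_text(encoding="utf-8"))
    results = payload.get("results") or [{}]
    return results[0].get("content") or {}


def summarize(content: Dict[str, Any]) -> Dict[str, int]:
    """Count what was captured so empty runs stand out at a glance."""
    return {
        "answer_chars": len(content.get("response_text") or ""),
        "citation_count": len(content.get("citations") or []),
        "link_count": len(content.get("links") or []),
    }
```

For example, `summarize(load_content("output.json"))` returns three counts that can be logged after each run.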

Step 7: Run without passing a query (optional)

You can define a default query in .env:

    GOOGLE_AI_MODE_QUERY=your query here

Then run:

    python -m google_ai_mode

This is useful for testing or scheduled runs where you do not want to pass arguments each time.
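One way to picture the fallback is the sketch below. It assumes that a query passed on the command line takes precedence over GOOGLE_AI_MODE_QUERY. That ordering is a guess for illustration, and `resolve_query` is a hypothetical name rather than the project's actual function.

```python
from typing import Mapping, Optional


def resolve_query(cli_query: Optional[str], env: Mapping[str, str]) -> str:
    """Prefer the command-line query; otherwise fall back to GOOGLE_AI_MODE_QUERY.

    The precedence order is an assumption made for this sketch.
    """
    for candidate in (cli_query, env.get("GOOGLE_AI_MODE_QUERY")):
        if candidate and candidate.strip():
            return candidate.strip()
    raise ValueError("Provide a query argument or set GOOGLE_AI_MODE_QUERY in .env")
```

With this shape, a scheduled job can run with no arguments while ad hoc runs still override the default.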

Step 8: Adjust parameters

The CLI exposes a few options to control the request:

| Option | What it does |
|---|---|
| --gl | Sets the country (default: us) |
| --hl | Sets the language (default: en) |
| --no-scraper | Disables scraper=google-serp |

Example:

    python -m google_ai_mode "ai seo tools" --gl uk --hl en

This lets you test how results change across regions or configurations.
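If you want to script several regional runs, the command line can be assembled programmatically. This sketch uses only the options listed in the table above; `build_cli_command` is a hypothetical helper, and actually running the commands requires the sample project to be installed in the active environment.

```python
import sys
from typing import List


def build_cli_command(
    query: str,
    gl: str = "us",
    hl: str = "en",
    use_scraper: bool = True,
) -> List[str]:
    """Build the argument list for one CLI run (hypothetical helper)."""
    # sys.executable keeps the run inside the currently active virtual environment.
    command = [sys.executable, "-m", "google_ai_mode", query, "--gl", gl, "--hl", hl]
    if not use_scraper:
        command.append("--no-scraper")
    return command


# Example: one command per region, ready to pass to subprocess.run(...).
commands = [build_cli_command("ai seo tools", gl=region) for region in ("us", "uk")]
```

Passing the arguments as a list avoids shell quoting problems when queries contain spaces or quotes.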

Visit the README page for the complete instructions: https://github.com/ScraperHub/google-ai-mode-scraper

How to Integrate Google AI Mode Scraping Into Your App

If you prefer integrating this into your own code instead of running the CLI, the project exposes a single high-level function.

The orchestration logic lives in google_ai_mode/google_ai_mode_scrape.py, but you only need to import one function:

    from google_ai_mode import scrape_google_ai_mode

This call handles the full pipeline:

- builds the AI Mode URL

- sends the request through Crawlbase

- parses and normalizes the response

The function automatically loads CRAWLBASE_REGULAR_TOKEN from .env if available, or falls back to your environment variables.

The result is the same structured JSON used throughout this guide, including response_text, citations, and links, so you can plug it directly into your application without additional parsing.
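In application code it can help to wrap the scrape call so the rest of your system only sees the fields it needs. The sketch below takes the scrape function as a parameter, because the exact signature of `scrape_google_ai_mode` is not shown in this guide. It assumes the callable accepts the query as its first positional argument and returns the results[0].content structure described here.

```python
from typing import Any, Callable, Dict


def fetch_answer(query: str, scrape: Callable[[str], Dict[str, Any]]) -> Dict[str, Any]:
    """Run a scrape callable and keep only the three normalized content fields.

    Assumption: scrape(query) returns a dict shaped like {"results": [{"content": {...}}]}.
    """
    payload = scrape(query)
    results = payload.get("results") or [{}]
    content = results[0].get("content") or {}
    return {
        "query": query,
        "response_text": content.get("response_text", ""),
        "citations": content.get("citations", []),
        "links": content.get("links", []),
    }
```

With the sample project installed, `scrape_google_ai_mode` could be passed as `scrape` if its signature matches that assumption; check the repository before relying on it. Injecting the callable also makes the wrapper easy to test with a stub.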

Understanding the Google AI Mode JSON Response Structure

The response follows a consistent structure, with a results array containing a single item. Most of the data you need lives inside that object.

Key fields include:

- results[0].content → prompt, response_text, citations, links, parse_status_code

- results[0].url → the AI Mode URL that was requested

- results[0].status_code, pc_status, crawl_url, token_used, scraper

- results[0].raw_body_preview → a short preview of the raw response for debugging

You will spend most of your time working with response_text, citations, and links.

If you are building dashboards or pipelines, keep status_code and pc_status alongside your extracted fields. This makes it easier to tell whether an issue comes from your parser or from the fetch layer.
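A simple triage function shows how those status fields can separate fetch problems from parser problems. This is a sketch under assumptions: it treats a value of 200 in both status_code and pc_status as success and an empty response_text as a parsing gap. The labels and the name `classify_result` are invented for this example.

```python
from typing import Any, Dict


def _is_ok(status: Any) -> bool:
    """Treat 200 (as int or string) as success; an assumption for this sketch."""
    return str(status) == "200"


def classify_result(result: Dict[str, Any]) -> str:
    """Label one results[0] item as 'ok', 'fetch_error', or 'parse_gap'."""
    if not (_is_ok(result.get("status_code")) and _is_ok(result.get("pc_status"))):
        # The request itself failed, so the parser never had usable input.
        return "fetch_error"
    content = result.get("content") or {}
    if not content.get("response_text"):
        # The fetch succeeded but no answer text was extracted.
        return "parse_gap"
    return "ok"
```

Counting these labels over time gives a quick signal of whether a drop in results comes from upstream fetching or from the normalizer.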

Common Issues When Web Scraping Google AI Mode (and Fixes)

Scraping Google surfaces is not something you set up once and forget. Payloads change, response shapes shift, and your parser needs to be flexible enough to handle that.

The most common issues you will run into are straightforward:

- Missing token errors: Make sure CRAWLBASE_REGULAR_TOKEN is set in .env or your environment, and that you are running the script from the correct directory so it can be loaded properly.

- 401 or Crawlbase request errors: Double-check that you are using a regular Crawling API token and that your account has available credits. Review Crawlbase response codes to understand various API error codes.

- Incomplete or unexpected output: If response_text looks empty or citations seem off, inspect raw_body_preview and the full response body. Both Google and Crawlbase payloads evolve, so your parser may need adjustments in google_ai_mode/normalize.py.

When results suddenly drop or look different, compare recent outputs with previous ones, especially the raw_body_preview. That usually tells you whether the issue is in your parsing logic or upstream in the response.
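Comparing two runs can be automated with a small diff over the normalized content. The sketch below assumes each citation is a dict with a `url` key, which is a guess based on the "URL + snippet" description in this guide. The function names are hypothetical.

```python
from typing import Any, Dict, List, Set


def citation_urls(content: Dict[str, Any]) -> Set[str]:
    """Collect citation URLs, assuming each citation is a dict with a 'url' key."""
    urls: Set[str] = set()
    for citation in content.get("citations") or []:
        if isinstance(citation, dict) and citation.get("url"):
            urls.add(citation["url"])
    return urls


def compare_runs(previous: Dict[str, Any], current: Dict[str, Any]) -> Dict[str, Any]:
    """Report whether the answer text changed and which citations came or went."""
    prev_urls = citation_urls(previous)
    cur_urls = citation_urls(current)
    added: List[str] = sorted(cur_urls - prev_urls)
    removed: List[str] = sorted(prev_urls - cur_urls)
    return {
        "text_changed": (previous.get("response_text") or "") != (current.get("response_text") or ""),
        "citations_added": added,
        "citations_removed": removed,
    }
```

If every citation disappears at once while the fetch status stays healthy, the payload shape has probably shifted and the normalizer needs a look.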

Key Takeaways for Scraping Google AI Mode

Google AI Mode shifts scraping from extracting links to working with structured answers, citations, and context. Instead of relying on fragile UI automation, you can build AI Mode URLs, fetch JSON through Crawlbase, and normalize the response into fields you can actually use.

This approach keeps the pipeline simple and stable. It also makes the data immediately usable for tracking answer changes, analyzing cited sources, and feeding into SEO or internal workflows.

If you want to try this yourself, start with the sample project and run it locally. Create a Crawlbase account, get your regular token or API key, add it to your .env, and run a few queries. Within minutes, you will have structured AI Mode data ready to store, compare, and build on.

Frequently Asked Questions

What is udm=50?

udm=50 is a Google search parameter that triggers AI Mode in the search results. When included in the query URL, it returns the AI-generated response layer instead of the traditional list of links.

For example:

    https://www.google.com/search?q=web+scraping&udm=50&gl=us&hl=en

Opening this URL in a browser loads the AI Mode version of the results for the query “web scraping”.
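When URLs come from logs or other tools, a quick check can confirm whether they target AI Mode. This is a minimal sketch using the standard library; `is_ai_mode_url` is a hypothetical helper name.

```python
from urllib.parse import parse_qs, urlsplit


def is_ai_mode_url(url: str) -> bool:
    """Return True when the URL's query string carries udm=50."""
    query = parse_qs(urlsplit(url).query)
    # parse_qs returns a list of values per parameter name.
    return query.get("udm") == ["50"]
```

The check looks only at the udm parameter, so it does not validate the host or the other search parameters.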

Does Crawlbase support Google AI Mode?

Yes. Crawlbase can fetch Google AI Mode results by requesting the AI Mode URL and returning the response as structured JSON. While the google-serp scraper may sometimes return a traditional SERP-shaped payload, the data can still be normalized into fields like response_text, citations, and links using the approach shown in this guide.

What token type do I need?

You need a regular Crawling API token (Non-Browser-Enabled API Key), not a JavaScript token (Browser Enabled API Key). The setup in this guide relies on Crawlbase handling the request and returning JSON directly, so there is no need for browser rendering.