Direct Answer: Crawlbase integrates with LangChain as a specialized tool within an agentic workflow, enabling real-time web data retrieval during execution. This allows LLMs to fetch, process, and use live web content, producing grounded responses instead of relying solely on static training data.

In practice, that means registering Crawlbase as a tool inside a LangChain or LangGraph agent. This guide gives you a working implementation you can run, test, and iterate on.

Instead of relying only on pre-trained knowledge, the agent can decide when it needs fresh or external information, call Crawlbase, process the response, and use that data to generate grounded outputs.

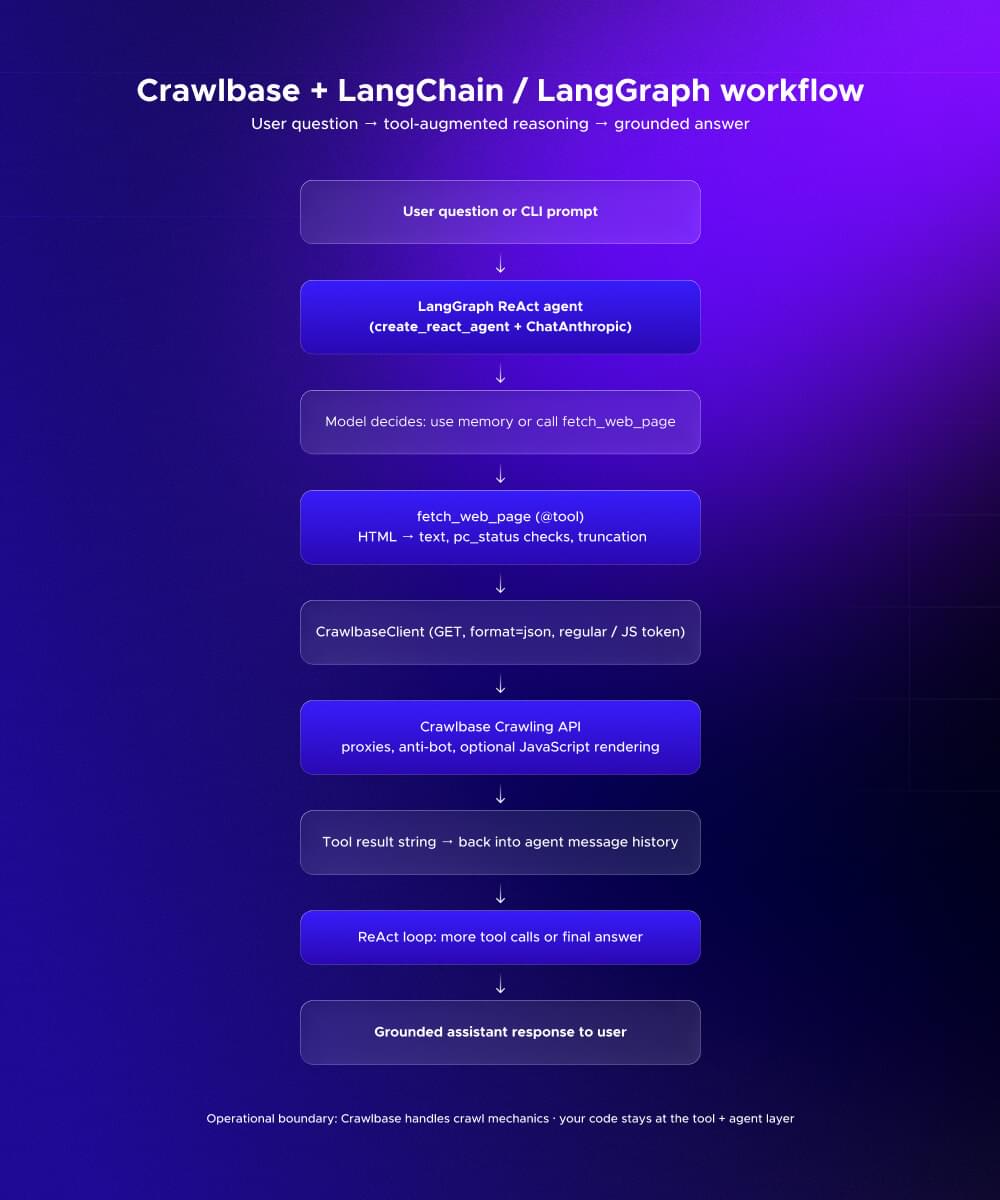

Concretely, you wrap Crawlbase’s Crawling API as a LangChain tool, register it in a ReAct-style agent, and let the model choose when to fetch data and when to answer directly. The result is a simple but powerful pipeline:

    user query → agent reasoning → Crawlbase fetch → structured text → grounded response

Crawlbase handles proxy rotation, blocking, and JavaScript rendering. LangChain handles orchestration. The model focuses on reasoning.
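Here is a minimal sketch of that wiring, not the repository’s actual code. It assumes the langchain-core, langgraph, and langchain-anthropic packages listed in Step 4, takes the model name as a parameter rather than hard-coding one, and uses a hypothetical fetcher callable as a stand-in for the real Crawlbase call.

```python
from typing import Callable

# Hypothetical stand-in for the real Crawlbase call:
# takes (url, use_javascript) and returns readable page text.
Fetcher = Callable[[str, bool], str]


def make_fetch_web_page(fetcher: Fetcher) -> Callable[..., str]:
    """Build the plain function that gets registered as the agent's tool."""

    def fetch_web_page(url: str, use_javascript: bool = False) -> str:
        """Fetch a web page and return its readable text.

        Set use_javascript to true for pages that render client-side.
        """
        return fetcher(url, use_javascript)

    return fetch_web_page


def build_agent(model_name: str, fetcher: Fetcher):
    """Register the tool in a prebuilt ReAct-style LangGraph agent."""
    # Imported lazily so the plain function above can be tested
    # without these packages installed.
    from langchain_anthropic import ChatAnthropic
    from langchain_core.tools import tool
    from langgraph.prebuilt import create_react_agent

    # The tool's name and description come from the function name and docstring.
    fetch_tool = tool(make_fetch_web_page(fetcher))
    model = ChatAnthropic(model=model_name)
    return create_react_agent(model, [fetch_tool])
```

Calling agent.invoke({"messages": [("user", "summarize https://example.com")]}) on the returned agent runs the loop, and the last entry in the returned messages holds the answer. The docstring matters here: it is what the model reads when deciding whether to call the tool.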

If you want to follow along with a complete, runnable project, you can clone it from GitHub: https://github.com/ScraperHub/how-to-use-crawlbase-with-langchain-for-ai-data-pipelines

Jump to section

- Why Use Crawlbase for LangChain Data Pipelines?

- Architecture: The Flow of a Grounded AI Agent

- Implementation: Setting Up the Project

- How to Know It’s Working

- Frequently Asked Questions

Why Use Crawlbase for LangChain Data Pipelines?

A LangChain agent is an LLM-powered system that can decide what actions to take instead of just generating text. It doesn’t just answer questions. It can choose to call tools, fetch data, or perform multi-step reasoning based on the user’s input.

The moment you give an agent that kind of freedom, you run into a practical issue. It needs access to real data, and the web is the obvious source. That’s also where things usually start breaking.

Standard scraping approaches break down quickly: sites block automated requests, content is rendered client-side, and the infrastructure has to scale with your traffic. Crawlbase solves these at the infrastructure level so your agent logic stays clean.

Instead of managing proxies, retries, or headless browsers, your agent simply calls a tool and receives structured output.

This enables a more robust system where:

- The agent works with clean, readable text instead of raw HTML

- JavaScript-heavy pages can be fetched when needed

- Failures are surfaced as structured signals, not silent errors

- You avoid maintaining a separate scraping infrastructure
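To make the idea of failures as structured signals concrete, here is one possible shape for a fetch result. The FetchResult class, its field names, and the FETCH_FAILED message format are all hypothetical, chosen for illustration rather than taken from the project.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class FetchResult:
    """Outcome of one fetch, success or failure, in a single shape."""

    url: str
    ok: bool
    text: str = ""
    error: Optional[str] = None


def to_tool_message(result: FetchResult) -> str:
    """Render a result as the string the model receives from the tool."""
    if result.ok:
        return result.text
    # A failure becomes a readable message the model can reason about,
    # instead of an exception or an empty string.
    return f"FETCH_FAILED url={result.url} reason={result.error}"
```

Because success and failure share one type, the agent always gets something it can act on, whether that is page text or a reason to try a different approach.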

More importantly, it improves the quality of your outputs.

Without real data, the model is guessing based on what it already knows. With Crawlbase in the loop, it can fetch current information and base its response on something concrete. That’s what turns a generic answer into something you can actually rely on.

Architecture: The Flow of a Grounded AI Agent

At a high level, this system is built around three layers, each with a very specific job.

- CrawlbaseClient handles the actual web requests. It talks to the Crawlbase Crawling API, switches between regular and JavaScript tokens when needed, and returns structured responses.

- fetch_web_page tool sits in the middle. It takes the raw HTML from Crawlbase, cleans it up, and turns it into readable text that the model can work with.

- LangGraph agent is the decision-maker. It looks at the user’s query and decides whether it needs to fetch data or can answer directly.
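The first layer can be sketched in a few lines. The endpoint and the token and url query parameters follow Crawlbase’s public Crawling API, but the class layout and method names below are assumptions, and the sketch stops at building the request rather than sending it.

```python
from dataclasses import dataclass
from typing import Optional
from urllib.parse import urlencode

API_ENDPOINT = "https://api.crawlbase.com/"


@dataclass
class CrawlbaseClient:
    """Illustrative client: picks a token and builds the request URL."""

    regular_token: str
    js_token: Optional[str] = None

    def token_for(self, use_javascript: bool) -> str:
        """Pick the JavaScript token only when rendering is requested."""
        if use_javascript:
            if not self.js_token:
                raise ValueError("use_javascript=True requires a JavaScript token")
            return self.js_token
        return self.regular_token

    def request_url(self, url: str, use_javascript: bool = False) -> str:
        """Build the Crawling API URL; urlencode percent-encodes the target."""
        query = urlencode({"token": self.token_for(use_javascript), "url": url})
        return f"{API_ENDPOINT}?{query}"
```

Keeping token selection inside the client means the tool and the agent only ever pass a boolean, and never see the tokens themselves.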

The flow looks like this:

When a user sends a query, the agent first tries to reason through it. If the answer requires fresh or external data, it calls the fetch_web_page tool.

That tool then sends a request through Crawlbase, which handles all the messy parts, such as proxies, blocking, and JavaScript rendering. Once the page is retrieved, CrawlbaseClient returns it to the tool as a structured response.

From there, the tool strips out the HTML, cleans the content, and trims it down so it fits within the model’s limits. That cleaned text is passed back to the agent, and the model uses it to generate a grounded answer.
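The strip, clean, and trim step could look roughly like this using only the standard library. The html_to_text name and the 8000-character default are assumptions. The project may use a different parser or limit.

```python
import re
from html.parser import HTMLParser


class _TextExtractor(HTMLParser):
    """Collect visible text, skipping script, style, and noscript contents."""

    SKIP = {"script", "style", "noscript"}

    def __init__(self) -> None:
        super().__init__()
        self.parts: list[str] = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.parts.append(data)


def html_to_text(html: str, max_chars: int = 8000) -> str:
    """Strip tags, collapse whitespace, and trim to max_chars characters."""
    parser = _TextExtractor()
    parser.feed(html)
    parser.close()
    # Join with spaces so text from adjacent elements does not fuse together.
    text = re.sub(r"\s+", " ", " ".join(parser.parts)).strip()
    return text[:max_chars]
```

Trimming by characters is a blunt proxy for the model’s token limit, but it keeps the tool free of any tokenizer dependency.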

The key idea here is the separation of concerns.

The model focuses on reasoning. The tool focuses on preparing usable data. Crawlbase handles everything related to accessing the web.

Because each layer has a clear role, the system is easier to maintain and scale. You can change how the agent thinks without touching how data is fetched, and vice versa.

Implementation: Setting Up the Project

Now, let’s walk through this the same way you would actually set it up on your machine.

Step 1: Prerequisites

Before you start, make sure you have the following:

- Python 3.11 or newer, since it aligns well with modern LangChain and LangGraph setups.

- Crawlbase tokens: Use the regular token for most HTML pages, and keep a JavaScript token ready for sites that rely on client-side rendering.

- An Anthropic API key for the sample agent. If you plan to use a different provider later, the overall pattern stays the same.

Step 2: Clone the repository

In your terminal, run:

    git clone https://github.com/ScraperHub/how-to-use-crawlbase-with-langchain-for-ai-data-pipelines.git
    cd how-to-use-crawlbase-with-langchain-for-ai-data-pipelines

This downloads the project into a new folder, with all components already wired together, and moves you into it.

Step 3: Configure environment variables

Create a .env file in the project root:

    CRAWLBASE_REGULAR_TOKEN=your_token

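These credentials allow the agent to fetch web data and generate responses. The JavaScript token and Anthropic API key from Step 1 belong in the same file; check the project README for the exact variable names.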
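Before starting the agent, it can help to fail fast when a credential is missing. The sketch below is one way to do that, with a hypothetical missing_settings helper. CRAWLBASE_REGULAR_TOKEN comes from the .env example, and ANTHROPIC_API_KEY is the variable the Anthropic SDK reads by default. The JavaScript token is left out because its variable name isn’t shown here.

```python
import os
from typing import Mapping

# CRAWLBASE_REGULAR_TOKEN: from the .env example in this guide.
# ANTHROPIC_API_KEY: the Anthropic SDK's default environment variable.
REQUIRED = ("CRAWLBASE_REGULAR_TOKEN", "ANTHROPIC_API_KEY")


def missing_settings(env: Mapping[str, str] = os.environ) -> list[str]:
    """Return the names of required settings that are unset or blank."""
    return [name for name in REQUIRED if not env.get(name, "").strip()]
```

With python-dotenv installed, calling load_dotenv() first copies the .env values into os.environ, so the check sees them.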

Step 4: Install dependencies

    pip install langgraph langchain langchain-core langchain-anthropic httpx python-dotenv pydantic pytest

Using a virtual environment is recommended if you’re managing multiple Python projects.

Optional: quick smoke test

Before wiring this into LangChain, it’s a good idea to confirm your token works.

You can run a simple live test like this:

    """Optional live smoke test against Crawlbase (no mocks)."""

This step isn’t required, but it helps you isolate issues early. If this works, you know your Crawlbase setup is correct before adding the agent on top.
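A live check along these lines can be sketched with the standard library alone. This is not the repository’s script. It assumes the Crawling API’s format=json option, which wraps the page in a JSON envelope that includes pc_status, and the function names are made up for illustration.

```python
import json
import urllib.request
from urllib.parse import urlencode


def smoke_test_url(token: str, target: str = "https://example.com") -> str:
    """Crawling API request URL asking for a JSON envelope."""
    return "https://api.crawlbase.com/?" + urlencode(
        {"token": token, "url": target, "format": "json"}
    )


def run_smoke_test(token: str) -> bool:
    """Make one live request; True when Crawlbase reports pc_status 200."""
    # urlopen raises on HTTP error statuses; the generous timeout allows
    # for slow crawls.
    with urllib.request.urlopen(smoke_test_url(token), timeout=90) as resp:
        payload = json.loads(resp.read().decode("utf-8"))
    print("pc_status:", payload.get("pc_status"))
    return str(payload.get("pc_status")) == "200"
```

Passing your regular token to run_smoke_test spends one real request, so call it deliberately rather than on every import.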

Step 5: Run the project

Now you can run the agent. From the same folder:

    python main.py "latest AI news today"

Or pass input via stdin:

    echo "summarize https://example.com" | python main.py
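Supporting both invocation styles takes very little code. The following is a guess at how an entry point might accept the query, not the project’s main.py. The read_query helper is hypothetical, and the print call stands in for handing the query to the agent.

```python
import sys
from typing import Sequence, TextIO


def read_query(argv: Sequence[str], stdin: TextIO) -> str:
    """Use the command-line arguments if present, otherwise read stdin."""
    if len(argv) > 1:
        return " ".join(argv[1:]).strip()
    return stdin.read().strip()


def main() -> int:
    query = read_query(sys.argv, sys.stdin)
    if not query:
        print('usage: python main.py "your question"', file=sys.stderr)
        return 2
    print(f"query: {query}")  # placeholder for passing the query to the agent
    return 0
```

In a real script you would finish with the usual main guard and exit with the value main() returns.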

When you execute the command, you’re triggering a full agent loop.

Your query is passed to the LangGraph agent, which decides whether it can answer directly or needs external data. If it does, it calls the fetch_web_page tool.

That tool sends a request to Crawlbase, retrieves the page, converts it into clean text, and returns it to the agent. The model then uses that data to produce a grounded response.

This is the core behavior you’re building: an agent that can decide when to fetch real-time information and use it effectively.

For a complete breakdown of the project structure and setup options, see the README.

How to Know It’s Working

Once everything is set up correctly, the output should feel noticeably different from a standard LLM response.

If you ask about recent events, the answer should reflect current information. If you pass a specific URL, the response should clearly use content from that page.

You’ll also notice the model behaves differently depending on the query. Sometimes it answers immediately. Other times, it decides to fetch data first.

If something isn’t working, the output usually tells you why. Missing tokens, blocked pages, or JavaScript-heavy sites will surface as readable messages instead of silent failures.

A missing token means your .env file needs fixing. Blocked pages and JavaScript-heavy sites, on the other hand, aren’t problems with your setup. They’re signals that your system is reacting to real-world conditions.

Conclusion: From Static LLMs to Live Data Agents

Integrating Crawlbase with LangChain turns your LLM from a static responder into a system that can access and verify real-time information.

Instead of relying on outdated knowledge or guessing, your agent can fetch live content, adapt to changes, and produce grounded answers.

This pattern becomes essential as soon as your application depends on fresh data, whether it’s news, pricing, documentation, or competitive intelligence.

Create a Crawlbase account, generate your tokens, and drop them into the project. You get 1,000 free requests to test real queries against a real pipeline before you commit to anything.

Frequently Asked Questions

When should use_javascript be true?

Use it when the content you need isn’t present in the initial HTML and is rendered client-side. This is common with modern frontend frameworks like React or sites that load content dynamically after page load.

In this setup, the system prompt guides the model on when to enable this. When use_javascript is true, CrawlbaseClient switches to your JavaScript token automatically.

What if a site blocks crawlers?

When a site blocks crawling, Crawlbase returns a non-200 pc_status, and your tool surfaces that as a readable message instead of failing silently.

From there, the agent can adapt. It might try the same URL using JavaScript rendering, switch to a different source, or adjust its response based on what it knows. At the product level, it’s also worth planning for fallback strategies, such as pointing users to official APIs or handling edge cases manually when needed.
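In this guide the retry decision belongs to the agent. If you would rather make the JavaScript fallback deterministic, the same idea can be sketched in plain code. This is an alternative design, not what the project does, and the StatusFetcher callable returning a (pc_status, text) pair is a hypothetical interface.

```python
from typing import Callable, Tuple

# Hypothetical fetcher: (url, use_javascript) -> (pc_status, text)
StatusFetcher = Callable[[str, bool], Tuple[int, str]]


def fetch_with_fallback(url: str, fetch: StatusFetcher) -> str:
    """Try a plain fetch, retry once with JavaScript rendering, then report."""
    status, text = fetch(url, False)
    if status == 200:
        return text
    js_status, js_text = fetch(url, True)
    if js_status == 200:
        return js_text
    # Both attempts failed: return a readable message the model can act on.
    return (
        f"Could not fetch {url}: pc_status {status} without JavaScript, "
        f"{js_status} with JavaScript. Try another source."
    )
```

The trade-off is cost versus control: a fixed retry can spend a JavaScript request the agent might have skipped, but the behavior no longer depends on the model’s judgment.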

How do I scale this beyond a demo?

Once you move past small, synchronous requests, the easiest path is to switch to Crawlbase’s Enterprise Crawler. It’s designed for async, high-volume workloads and fits directly into your existing setup.

You don’t need to rebuild anything. Just configure a webhook and add a couple of parameters to your current Crawling API requests.

From there, your pipeline becomes asynchronous. Your agent triggers crawls, and your system processes results as they arrive. Crawlbase continues handling the web access side, so you can focus on making your pipeline more reliable as it scales.
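The “couple of parameters” can be sketched as follows. The callback and crawler parameter names are assumptions based on Crawlbase’s Crawler documentation, so check them against the current docs before relying on them. The crawler name is whatever you configured alongside your webhook.

```python
from urllib.parse import urlencode


def async_push_url(token: str, target: str, crawler_name: str) -> str:
    """Build a Crawling API URL that queues the page instead of waiting for it."""
    params = {
        "token": token,
        "url": target,
        "callback": "true",       # assumed flag: deliver the result to the webhook
        "crawler": crawler_name,  # assumed: name of the crawler set up in the dashboard
    }
    return "https://api.crawlbase.com/?" + urlencode(params)
```

Everything else in the request stays the same, which is why the agent-side tool needs little more than a different return value: an acknowledgement that the crawl was queued rather than the page text.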