Are you looking for the perfect data collection tool for Booking.com?

Get Crawlbase now!

Create a free account and then apply from the dashboard.

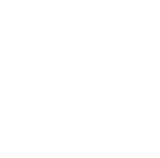

Booking.com is a popular website for online lodging reservations and other travel products. It is available in more than 40 languages and offers millions of accommodation listings, including homes, apartments, and other unique places to stay. The site has a user-friendly interface, but getting the data you want can prove difficult because of its underlying security measures.

If you plan to monitor prices, gather accommodation listings, or analyze big datasets for your projects, you will need a good web crawler to scrape various information efficiently. At Crawlbase, we let you focus on your business needs by providing the most reliable rotating proxy API to bypass any scraper roadblocks like CAPTCHAs, proxy issues, IP blocks, and more.

Highly optimized for crawling and scraping Booking.com content

Send as many API requests as needed. No bandwidth restrictions, guaranteed.

Well-maintained rotating proxies with 99.9% network uptime.

Enhanced with Artificial Intelligence to avoid bot detection and CAPTCHAs.

No subscription required. Get your 1000 free requests upon signing up!

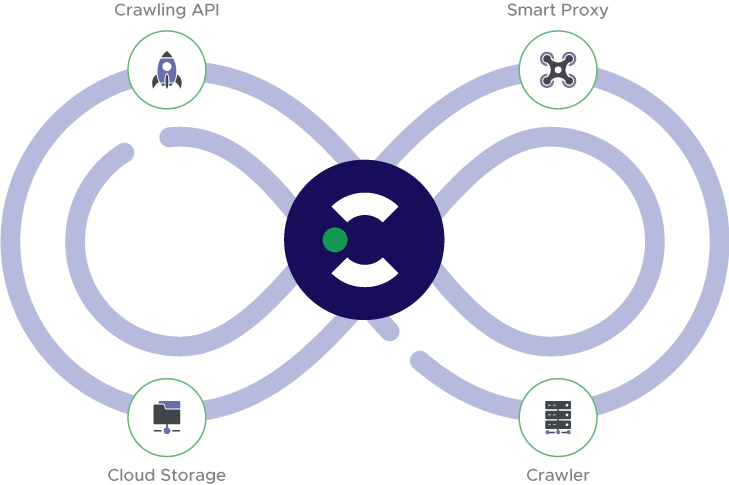

The complete solution for your data collection needs

Easily deployable APIs perfect for beginners and experts, small and big projects.

Crawling API

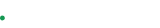

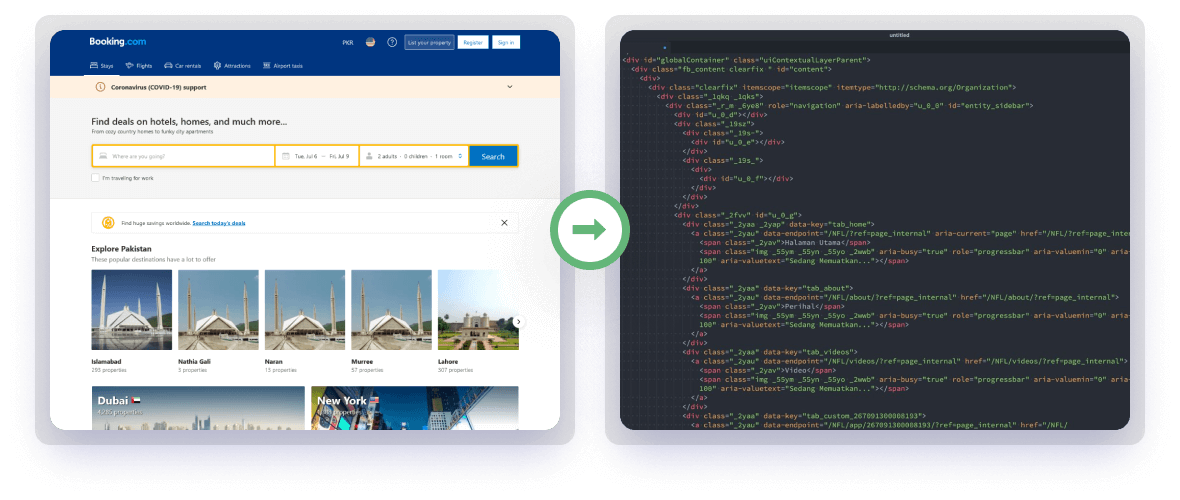

Built on top of thousands of residential and data center proxies, the Crawling API lets you extract the full HTML code or get the parsed content of most websites with ease.

Smart AI Proxy

Can't use an API? Our reverse proxies forward your connection requests through a randomly rotating IP address from a pool of quality proxies before they reach the target website.

Cloud storage

Crawlbase Cloud Storage handles scaling, backing up, and managing your cloud space securely. It is an easy-to-use API where you can save your crawled or scraped data and screenshots straight to our cloud server.

Crawler

Integrate your system with the Crawler and receive asynchronous callbacks at a webhook you provide. Push URLs using the Crawling API, and the Crawler will push the crawled pages back to your server.

Start crawling and scraping

Just sign up, and you can start scraping in minutes. If you need assistance or expert advice, our team of engineers is always ready to help.

Create Free Account!

Gain unrestricted access to data with Crawlbase

We are here to make the internet accessible for everyone. Our service provides unlimited bandwidth with instant access to the most functional API features.

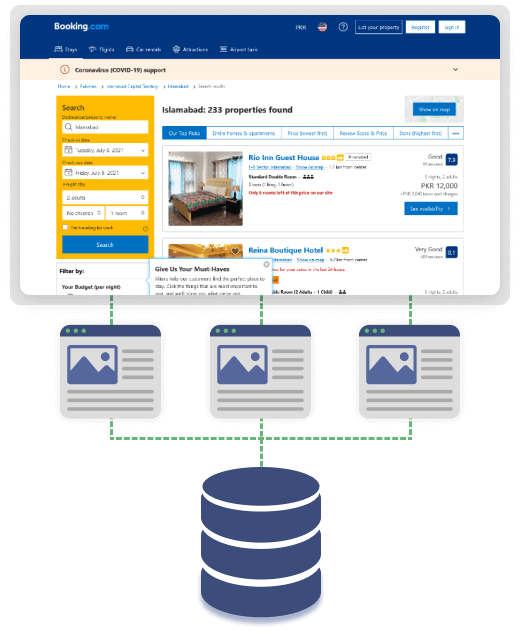

Simple yet highly scalable API for everyone

Use the API on its own to crawl individual Booking.com listings, or integrate it into your current system and start scraping thousands or even millions of pages automatically.

Compatible with any programming language, with free-to-use libraries for Python, Node.js, Ruby, PHP, and more.

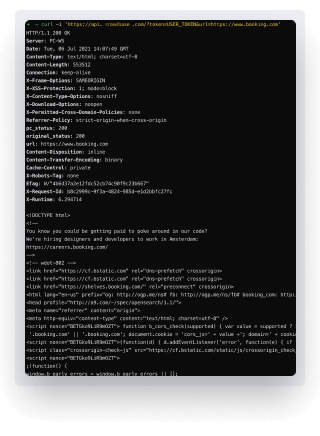

Get your API authentication key by signing up and try your first call with just a simple cURL request:
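For readers working in Python rather than the shell, a first call might be sketched as follows. This is a minimal sketch, not the official client: the endpoint `https://api.crawlbase.com/` and the `token` and `url` parameter names are assumptions that this page does not state, so check them against your dashboard before relying on them.

```python
from urllib.parse import urlencode
from urllib.request import urlopen

# Assumed endpoint and parameter names; confirm them in your dashboard.
API_ENDPOINT = "https://api.crawlbase.com/"


def build_api_url(token: str, target_url: str) -> str:
    """Return the API URL that asks the service to fetch target_url."""
    # urlencode percent-encodes the target URL so that its own query
    # string is not confused with the API's parameters.
    return API_ENDPOINT + "?" + urlencode({"token": token, "url": target_url})


def fetch_page(token: str, target_url: str, timeout: float = 90.0) -> str:
    """Perform the request and return the response body as text."""
    with urlopen(build_api_url(token, target_url), timeout=timeout) as response:
        return response.read().decode("utf-8", errors="replace")
```

Calling `fetch_page("YOUR_TOKEN", "https://www.booking.com/")` would then return the page body, provided the assumptions above hold for your account.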

Frequently Asked Questions

What is the rate limit of your API?

The default rate limit for Booking.com is 20 requests per second. If you need a higher limit to meet your production needs, contact us to discuss a rate limit increase.
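To stay under a limit like this on the client side, requests can be spaced out before they are sent. The `RateLimiter` class below is a hypothetical helper for illustration, not part of any Crawlbase library, and it assumes a single-threaded caller.

```python
import time


class RateLimiter:
    """Space calls so that at most max_per_second start each second."""

    def __init__(self, max_per_second: float = 20.0,
                 clock=time.monotonic, sleep=time.sleep):
        self.min_interval = 1.0 / max_per_second
        self._clock = clock      # injectable for testing
        self._sleep = sleep      # injectable for testing
        self._next_allowed = 0.0

    def wait(self) -> None:
        """Block until the next request slot is available."""
        now = self._clock()
        if now < self._next_allowed:
            self._sleep(self._next_allowed - now)
            now = self._next_allowed
        # Reserve the following slot one interval later.
        self._next_allowed = now + self.min_interval
```

Call `limiter.wait()` immediately before each API request; with the default of 20 per second, consecutive requests are spaced at least 50 ms apart.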

What happens if my request fails?

Our success rate is very high in most cases, but if a request does fail, you can simply retry it, as failed requests are not charged.
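A retry can be as simple as a loop around the call. The sketch below adds exponential backoff between attempts, which is a design choice made here rather than something the API requires; `fetch_with_retry` and its parameters are hypothetical names used for illustration.

```python
import time


def fetch_with_retry(fetch, max_attempts: int = 3, base_delay: float = 1.0,
                     sleep=time.sleep):
    """Call fetch() until it succeeds or max_attempts is reached."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    last_error = None
    for attempt in range(max_attempts):
        try:
            return fetch()
        except Exception as error:  # narrow this to your HTTP client's errors
            last_error = error
            if attempt < max_attempts - 1:
                # Wait base_delay, then 2x, then 4x, and so on.
                sleep(base_delay * 2 ** attempt)
    raise last_error
```

Here `fetch` is any zero-argument callable that performs one request and raises on failure, for example a `lambda` wrapping your HTTP call.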

Is it possible to have my API request geolocated from a specific country?

Yes, you are free to pass our API’s country parameter on each of your requests. By default, you will have access to 27 countries.
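As an illustration, the country can be added to an already built request URL. This is a sketch under assumptions: the parameter is taken to be named `country` as described above, and the two-letter upper-case code format (such as `US`) is assumed, not confirmed by this page. `with_country` is a hypothetical helper.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def with_country(api_url: str, country_code: str) -> str:
    """Return api_url with the country parameter set, replacing any old one."""
    parts = urlsplit(api_url)
    # Keep every existing parameter except a previous country value.
    params = [(k, v) for k, v in parse_qsl(parts.query) if k != "country"]
    # Assumption: the API expects an upper-case two-letter code.
    params.append(("country", country_code.upper()))
    return urlunsplit(parts._replace(query=urlencode(params)))
```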

How do we scrape the next page of the search results?

You will need to use each website's own pagination. For Booking.com, pass the &rows and &offset parameters in the target URL. For more information, please contact our support team.
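The page URLs can be generated ahead of time and then sent through the API one by one. The sketch below assumes that `rows` is the number of results per page and that `offset` counts results, not pages; the search URL in the usage note is only illustrative, and `paginated_urls` is a hypothetical helper.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def paginated_urls(search_url: str, rows: int, pages: int):
    """Yield search_url once per page with rows and offset set."""
    parts = urlsplit(search_url)
    # Drop any rows/offset already present so they are not duplicated.
    base = [(k, v) for k, v in parse_qsl(parts.query)
            if k not in ("rows", "offset")]
    for page in range(pages):
        # Assumption: offset is the index of the first result on the page.
        query = urlencode(base + [("rows", rows), ("offset", page * rows)])
        yield urlunsplit(parts._replace(query=query))
```

For example, `list(paginated_urls("https://www.booking.com/searchresults.html?ss=Paris", rows=25, pages=3))` produces three URLs with offsets 0, 25, and 50. Each one still has to be URL-encoded when passed to the API as the target URL.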

Used by the world’s most innovative businesses – big and small

Supporting all kinds of crawling projects

Create Free Account!

Customer Success stories

Start crawling and scraping the web today
