Goodreads stands out as a top online destination for people to share their thoughts on books. With its community of over 90 million signed-up users, the site buzzes with reviews, comments, and ratings on countless books. This wealth of user-created content offers a goldmine to anyone looking to extract valuable information such as book scores and reader feedback.

This post will guide you through making a program to gather book ratings and comments using Python and the Crawlbase Crawling API. We’ll walk you through setting up your workspace, dealing with page-by-page results, and saving the information in an organized way.

Ready to dive in?

Table of Contents

- Why Scrape Goodreads?

- Key Data Points to Extract from Goodreads

- Crawlbase Crawling API for Goodreads Scraping

- Why Use Crawlbase for Goodreads Scraping?

- Crawlbase Python Library

- Installing Python and Required Libraries

- Choosing an IDE

- Inspecting the HTML for Selectors

- Writing the Goodreads Scraper for Ratings and Comments

- Handling Pagination

- Storing Data in a JSON File

- Complete Code Example

Why Scrape Goodreads?

Goodreads is a great place for book lovers, researchers, and businesses. Scraping it gives you a large body of user-generated data that you can use to analyze book trends, gather reader feedback, or build lists of popular books. Here are a few reasons why scraping Goodreads can be useful:

- Rich Data: Goodreads provides ratings, reviews, and comments on books, making it an ideal place to understand the preferences of readers.

- Large User Base: With millions of active users, Goodreads offers a massive dataset that is well suited to in-depth analysis.

- Market Research: Data available from Goodreads can be used to help businesses understand market trends, popular books, and customer feedback that can be useful for marketing or product development.

- Personal Projects: Scraping Goodreads can be handy if you are working on a personal project, like building your own book recommendation engine or analyzing reading habits.

Key Data Points to Extract from Goodreads

When scraping Goodreads, you should focus on the most important data points to get useful insights. Here are the key ones to collect:

- Book Title: This is essential for any analysis or reporting.

- Author Name: To categorize and organize books and to track popular authors.

- Average Rating: The average rating Goodreads calculates from user ratings. This is a key signal of how well a book is received.

- Number of Ratings: The total number of ratings, which indicates how many readers have rated the book.

- User Comments/Reviews: User reviews are great for qualitative analysis, since they show what readers liked or disliked.

- Genres: Goodreads books are often tagged with genres, which helps to categorize them and recommend similar books.

- Publication Year: Useful to track trends over time or compare books published in the same year.

- Book Synopsis: The synopsis provides a summary of the book’s plot and gives context to what the book is about.
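One way to keep these fields together while scraping is a small record type. The sketch below is only an illustration: the class and field names (`BookRecord`, `Review`, `ratings_count`, and so on) are assumptions chosen for this example, not names used by Goodreads or Crawlbase, and the values in the usage section are placeholders.

```python
from dataclasses import dataclass, field, asdict
from typing import List, Optional


@dataclass
class Review:
    """A single reader review (hypothetical structure)."""
    user: str
    text: str


@dataclass
class BookRecord:
    """Container for the data points listed above (hypothetical structure)."""
    title: str
    author: str
    average_rating: Optional[float] = None
    ratings_count: Optional[int] = None
    genres: List[str] = field(default_factory=list)
    publication_year: Optional[int] = None
    synopsis: Optional[str] = None
    reviews: List[Review] = field(default_factory=list)


if __name__ == "__main__":
    # Placeholder values only, not real Goodreads data.
    record = BookRecord(title="Example Title", author="Example Author")
    record.reviews.append(Review(user="reader_1", text="Placeholder review text."))
    # asdict() converts the nested dataclasses into plain dicts and lists.
    print(asdict(record))
```

Fields that are not always present on a page default to `None` or an empty list, so a partially scraped book can still be stored.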

Crawlbase Crawling API for Goodreads Scraping

When scraping dynamic websites like Goodreads, traditional request methods struggle due to JavaScript rendering and complex pagination. This is where the Crawlbase Crawling API comes in handy. It handles JavaScript rendering, paginated content, and captchas so Goodreads scraping is smoother.

Why Use Crawlbase for Goodreads Scraping?

- JavaScript Rendering: Crawlbase handles the JavaScript Goodreads uses to display ratings, comments and other dynamic content.

- Effortless Pagination: Crawlbase can click pagination buttons for you through the css_click_selector parameter, so loading additional reviews can be automated.

- Prevention Against Blocks: Crawlbase manages proxies and captchas for you, reducing the risk of being blocked or detected.

Crawlbase Python Library

Crawlbase has a Python library that makes web scraping a lot easier. This library requires an access token for authentication. You can get a token after creating an account on Crawlbase.

Here’s an example function demonstrating how to use the Crawlbase Crawling API to send requests:

    from crawlbase import CrawlingAPI
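A minimal sketch of such a request function might look like the following. The helper names `make_api` and `fetch_html` are hypothetical, and the sketch assumes the client returns a dictionary with `headers` (including a `pc_status` entry) and a `body`, which is how the crawlbase library is commonly used. Check the library documentation before relying on these details.

```python
def make_api(token):
    """Create a Crawlbase client for the given token.

    The import is deferred so that fetch_html() below can also be tried
    with a stand-in client that exposes the same get() method.
    """
    from crawlbase import CrawlingAPI
    return CrawlingAPI({"token": token})


def fetch_html(api, url, options=None):
    """Request a URL through the Crawling API and return its HTML, or None."""
    response = api.get(url, options or {})
    # Assumption: 'pc_status' carries Crawlbase's own status for the request.
    pc_status = str(response["headers"].get("pc_status"))
    if pc_status == "200":
        body = response["body"]
        # The body may arrive as bytes; decode it into text if so.
        return body.decode("utf-8") if isinstance(body, bytes) else body
    print(f"Failed to fetch {url}. Crawlbase status code: {pc_status}")
    return None


# Example usage (requires a real token and network access):
# api = make_api("YOUR_CRAWLBASE_JS_TOKEN")
# html = fetch_html(api, "https://www.goodreads.com/book/show/4671.The_Great_Gatsby/reviews")
```

Passing the client in as an argument keeps the function easy to test, because a stub object with a `get` method can replace the real API.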

Note: Crawlbase offers two types of tokens:

- Normal Token for static sites.

- JavaScript (JS) Token for dynamic or browser-based requests.

For scraping dynamic sites like Goodreads, you’ll need the JS Token. Crawlbase provides 1,000 free requests to get you started, and no credit card is required for this trial. For more details, check out the Crawlbase Crawling API documentation.

Setting Up Your Python Environment

Before scraping Goodreads for book ratings and comments, you need to set up your Python environment properly. Here’s a quick guide to get started.

Installing Python and Required Libraries

- Download Python: Go to the official Python website and download the latest version for your operating system. During installation, remember to add Python to the system PATH.

- Verify the Installation: Once Python is installed, confirm that it is available by running `python --version` in your terminal or command prompt.

- Install Libraries: Use pip to install the required libraries: `crawlbase`, to make HTTP requests through the Crawlbase Crawling API, and `BeautifulSoup` from the bs4 library, to parse web pages:

    pip install crawlbase
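To confirm that both libraries are importable before you start, a quick check like the one below can help. This is a small sketch rather than part of the scraper. The function name `missing_packages` is hypothetical, and the mapping relies on the fact that the `bs4` module is distributed on PyPI as `beautifulsoup4`.

```python
import importlib.util

# Importable module name -> name to pass to "pip install".
REQUIRED = {
    "crawlbase": "crawlbase",
    "bs4": "beautifulsoup4",
}


def missing_packages(required=REQUIRED):
    """Return the pip names of required modules that cannot be found."""
    return [
        pip_name
        for module_name, pip_name in required.items()
        if importlib.util.find_spec(module_name) is None
    ]


if __name__ == "__main__":
    missing = missing_packages()
    if missing:
        print("Missing packages. Install them with: pip install " + " ".join(missing))
    else:
        print("All required packages are installed.")
```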

Choosing an IDE

A good IDE simplifies your coding. Below are some of the popular ones:

- VS Code: A free, lightweight, general-purpose editor with Python extensions.

- PyCharm: A robust Python IDE with many built-in tools for professional development.

- Jupyter Notebooks: Good for running code interactively, especially for data projects.

With your environment ready, you can now move on to scraping Goodreads.

Scraping Goodreads for Book Ratings and Comments

When scraping book ratings and comments from Goodreads, keep in mind that the content is dynamic: comments and reviews are loaded asynchronously, and pagination is handled through buttons. This section describes how to extract this information and handle pagination through Crawlbase, using a JS Token and the css_click_selector parameter for button navigation.

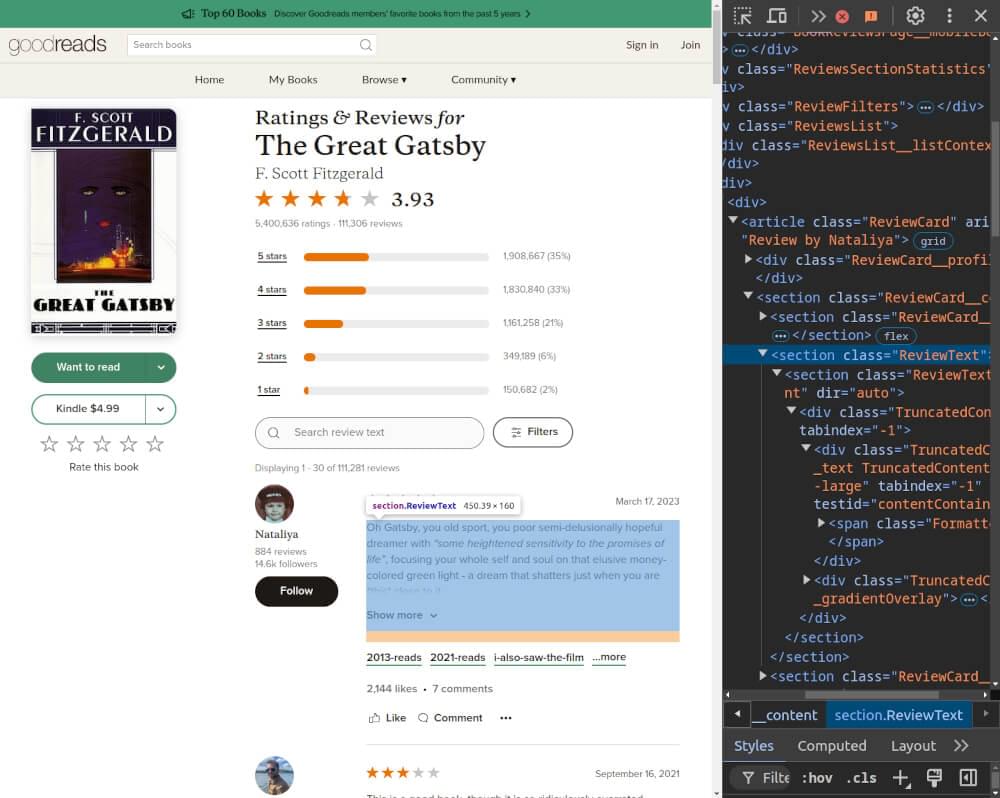

Inspecting the HTML for Selectors

First, inspect the HTML of the Goodreads page you want to scrape. For example, to scrape reviews for The Great Gatsby, use the URL:

    https://www.goodreads.com/book/show/4671.The_Great_Gatsby/reviews

Open the developer tools in your browser and navigate to this URL.

Here are some key selectors to focus on:

- Book Title: Found in an `h1` tag with class `H1Title`, specifically in an anchor tag with `data-testid="title"`.

- Ratings: Located in a `div` with class `RatingStatistics`, with the value in a `span` tag of class `RatingStars` (using the `aria-label` attribute).

- Reviews: Each review is within an `article` with class `ReviewCard`, inside a `div` with class `ReviewsList`. Each review includes:

  - User’s name in a `div` with `data-testid="name"`.

  - Review text in a `section` with class `ReviewText`, containing a `span` with class `Formatted`.

- Load More Button: The “Show More Reviews” button in the review section for pagination, identified by `button:has(span[data-testid="loadMore"])`.

Writing the Goodreads Scraper for Ratings and Comments

The Crawlbase Crawling API provides several parameters that you can pass with each request. With Crawlbase’s JS Token, you can handle dynamic content loading on Goodreads, and the ajax_wait and page_wait parameters give the page time to load.

Here’s a Python script to scrape Goodreads for book details, ratings, and comments using Crawlbase Crawling API.

    from crawlbase import CrawlingAPI
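As a small illustration of the ajax_wait and page_wait parameters, the options for a request could be assembled like this. The helper name `build_request_options` is hypothetical, the 5000 millisecond default is an arbitrary starting value, and passing the values as strings is an assumption based on how Crawlbase examples are usually written.

```python
def build_request_options(ajax_wait=True, page_wait_ms=5000):
    """Build the options dictionary passed along with a Crawling API request.

    ajax_wait: ask Crawlbase to wait for asynchronous requests to finish.
    page_wait_ms: extra time, in milliseconds, to let the page render.
    """
    if page_wait_ms < 0:
        raise ValueError("page_wait_ms must be non-negative")
    return {
        "ajax_wait": "true" if ajax_wait else "false",
        "page_wait": str(page_wait_ms),
    }


# Example usage with a Crawlbase client created from a JS Token:
# response = crawling_api.get(url, build_request_options(page_wait_ms=8000))
```

If reviews come back empty, increasing `page_wait_ms` is a reasonable first thing to try.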

Handling Pagination

Goodreads uses a button-based pagination system to load more reviews. You can use Crawlbase’s css_click_selector parameter to simulate clicking the “Show More Reviews” button and scrape the additional reviews it loads. This method helps you collect as many reviews as possible.

Here’s how the pagination can be handled:

    def scrape_goodreads_reviews_with_pagination(base_url):
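A hedged sketch of how such a function might be written is shown below. Unlike the signature above, this version takes the Crawlbase client (`api`) and a parsing callback (`parse_reviews`) as extra arguments. Both are assumptions made so the example stays self-contained. It issues a single request that asks Crawlbase to click the “Show More Reviews” button before returning the HTML, and it does not cover whether very long review lists need repeated clicks.

```python
# Selector for the "Show More Reviews" button, as identified earlier.
LOAD_MORE_SELECTOR = 'button:has(span[data-testid="loadMore"])'


def scrape_goodreads_reviews_with_pagination(api, base_url, parse_reviews):
    """Fetch a reviews page with the load-more button clicked, then parse it.

    api: object with a get(url, options) method, such as a Crawlbase client.
    parse_reviews: callable that turns page HTML into a list of reviews.
    """
    options = {
        "ajax_wait": "true",           # wait for asynchronous content
        "page_wait": "5000",           # arbitrary 5 second render allowance
        "css_click_selector": LOAD_MORE_SELECTOR,
    }
    response = api.get(base_url, options)

    # Assumption: 'pc_status' carries Crawlbase's own status for the request.
    pc_status = str(response["headers"].get("pc_status"))
    if pc_status != "200":
        print(f"Failed to fetch {base_url}. Crawlbase status code: {pc_status}")
        return []

    body = response["body"]
    html = body.decode("utf-8") if isinstance(body, bytes) else body
    return parse_reviews(html)
```

Returning an empty list on failure lets the caller treat "no reviews" and "request failed" uniformly. Raise an exception instead if you need to tell the two cases apart.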

Storing Data in a JSON File

After extracting the book details and reviews, you can write the scraped data to a JSON file. This format is well suited to structured data and is easy to process later.

Here’s how to save the data:

    # Function to save scraped reviews to a JSON file
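A minimal version of such a save function, using only Python's standard `json` module, could look like this. The function name `save_reviews_to_json` and the default filename are assumptions for illustration.

```python
import json


def save_reviews_to_json(data, filename="goodreads_reviews.json"):
    """Write scraped data (dicts, lists, strings, numbers) to a JSON file."""
    with open(filename, "w", encoding="utf-8") as json_file:
        # ensure_ascii=False keeps non-English review text readable in the file.
        json.dump(data, json_file, ensure_ascii=False, indent=4)
    print(f"Data saved to {filename}")


# Example usage:
# save_reviews_to_json({"title": "...", "reviews": []})
```

Opening the file with UTF-8 encoding matters here because reviews often contain accented characters and emoji.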

Complete Code Example

Here is the complete code that scrapes Goodreads for book ratings and reviews, handles button-based pagination, and saves the data in a JSON file:

    from crawlbase import CrawlingAPI

By using Crawlbase’s JS Token and handling button-based pagination, this scraper efficiently extracts Goodreads book ratings and reviews and stores them in a usable format.

Example Output:

1 | { |

Final Thoughts

Scraping Goodreads for book ratings and comments can give you valuable insights from readers. Using Python with the Crawlbase Crawling API makes this easier, especially when dealing with dynamic content and button-based pagination on Goodreads. With Crawlbase handling the technical complexities, you can focus on extracting the data.

Follow the steps in this guide and you’ll be able to scrape reviews and ratings and store the data in a structured format for analysis. If you want to do more web scraping, check out our guides on scraping other key websites.

📜 How to Scrape Monster.com

📜 How to Scrape Groupon

📜 How to Scrape TechCrunch

📜 How to Scrape Clutch.co

If you have questions or want to give feedback, our support team can help with web scraping. Happy scraping!

Frequently Asked Questions

Q. What is the best way to scrape Goodreads for book ratings and comments?

The best way to scrape Goodreads is to use Python with the Crawlbase Crawling API. This combination lets you scrape dynamic content such as book ratings and comments, because the Crawlbase Crawling API handles JavaScript rendering and button-based pagination for you.

Q. What data points can I extract when scraping Goodreads?

When scraping Goodreads, you can extract the following data points: book titles, authors, average ratings, individual user ratings, comments, and total reviews. This data gives you insight into how readers are receiving books and helps you make informed decisions for book recommendations or analysis.

Q. How does pagination work when scraping reviews from Goodreads?

Goodreads uses button-based pagination to load more reviews. With the Crawlbase Crawling API, you can click the “Show More Reviews” button programmatically by setting the css_click_selector parameter in the API call. This way, additional reviews are loaded and you can collect them without navigating the site manually.